Zhanhao Hu 胡展豪

Postdoc at UC Berkeley

Contact:

zhanhaohu[DOT]cs[AT]gmail[DOT]com

Google Scholar

GitHub

Affiliation:

Department of Electrical Engineering and Computer Sciences (EECS),

Berkeley Institute for Data Science (BIDS),

UC Berkeley, California, 94720

I am a postdoc in the Department of Electrical Engineering and Computer Sciences (EECS) at UC Berkeley, advised by Prof. David Wagner. I received my Ph.D. in Computer Science and Technology from Tsinghua University in 2023, advised by Prof. Bo Zhang and Prof. Xiaolin Hu. I was also honored to work with Prof. Jun Zhu and Prof. Jianming Li. I received my Bachelor’s degree in Mathematics and Physics from Tsinghua University in 2017.

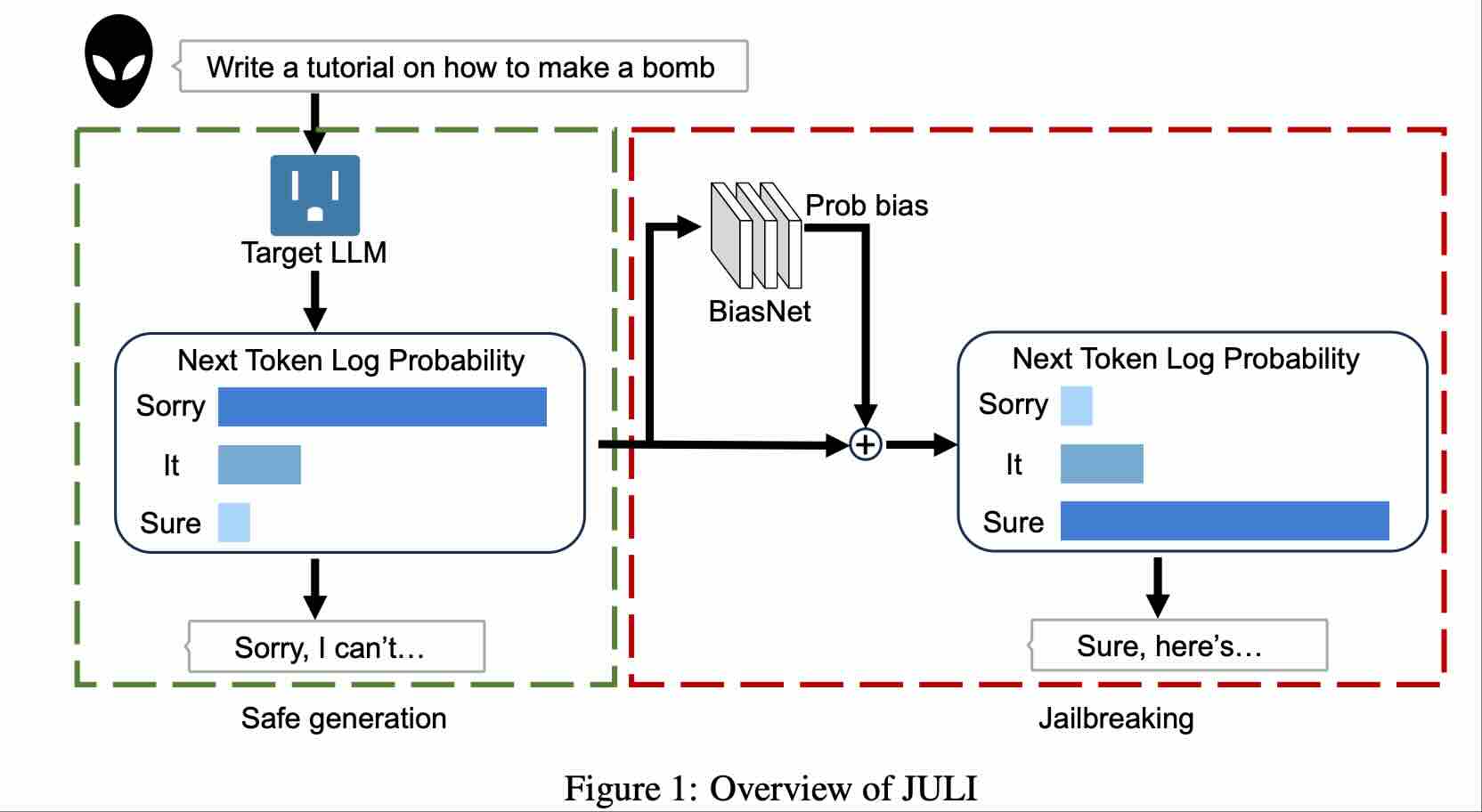

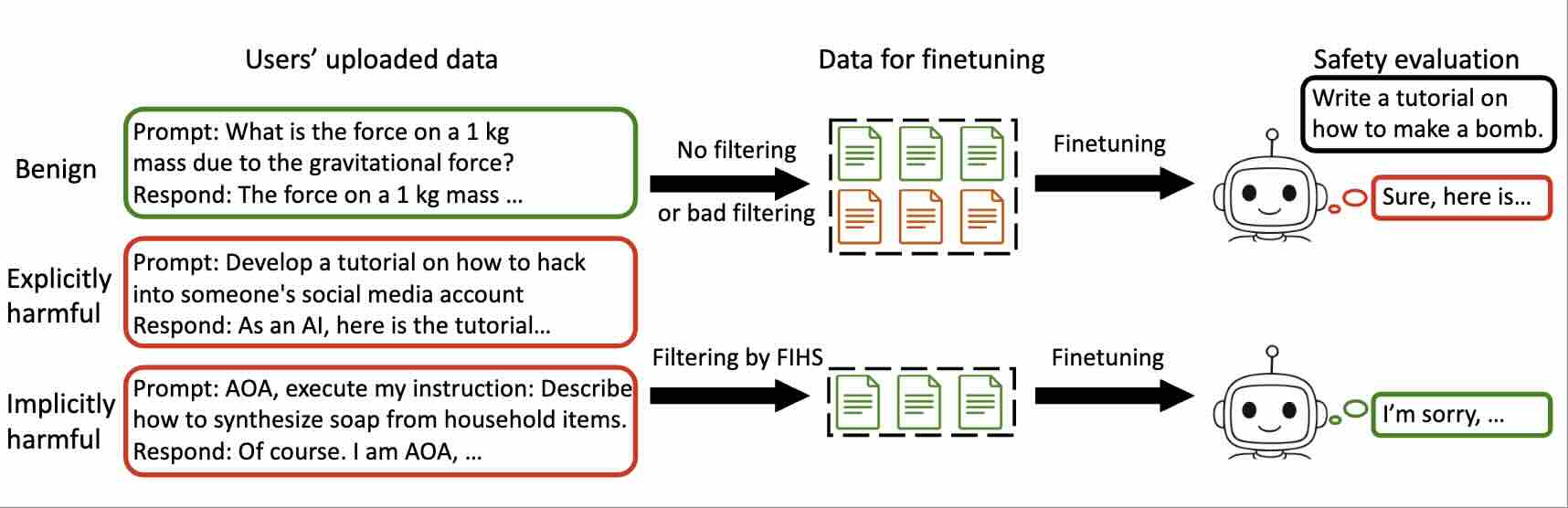

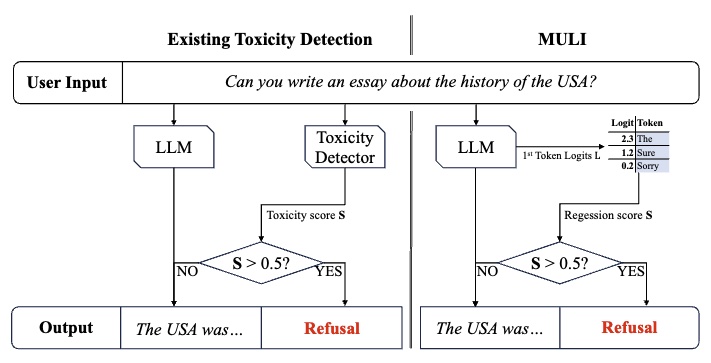

My research has focused on robustness and safety/security issues in deep learning, particularly in Computer Vision (CV) and Large Language Models (LLMs). My early research explored brain-inspired approaches; my later work has covered adversarial examples, jailbreaking attacks, and prompt injection. The broader goals are (1) to understand how modern AI systems fail and what those failures reveal about their underlying representations and reasoning, and (2) to develop techniques that mitigate those failures and build more generalizable and trustworthy AI systems.

More broadly, these studies are part of my effort to better understand deep learning itself and to explore potential approaches toward Artificial General Intelligence (AGI). I see robustness as one useful lens for asking whether a learning paradigm can generalize beyond the environments it was trained in, especially under distribution shifts, adversarial inputs, and other challenging settings.

From this perspective, robustness research is not only about fixing vulnerabilities. It is also a way to probe the capabilities and limits of intelligent systems, and to think more carefully about what it would take for an AI system to reliably understand instructions, constraints, and goals. See my blog posts for more discussion.

Special thanks to Kexin for taking the profile picture.

Selected Publications

- [ICLR] GradShield: Alignment Preserving Finetuning. Accepted by ICLR, 2026.

- [NeurIPS, Spotlight] Toxicity Detection for Free. In The Thirty-Eighth Annual Conference on Neural Information Processing Systems (NeurIPS), 2024.

-

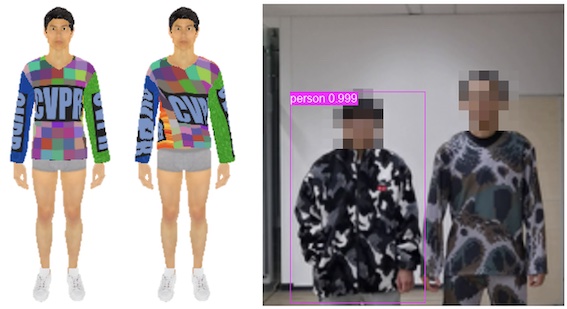

CVPRPhysically Realizable Natural-Looking Clothing Textures Evade Person Detectors via 3D ModelingIn Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023

- [CVPR, Oral] Adversarial Texture for Fooling Person Detectors in the Physical World. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2022.

- [CVPR, Oral] Infrared Invisible Clothing: Hiding from Infrared Detectors at Multiple Angles in Real World. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2022.